Topic: This post is about collecting and visualizing I/O latency histograms for NetApp filers in C-mode.

Motivations: Drilling down into I/O latency is an important technique for troubleshooting and benchmarking storage. Average latency values can hide details of what is happening on the storage. Think, for example, of storage systems with both flash and spindles, each serving I/O at a different latency. Moreover, averaging the measured values over time can hide details when the workload varies or when issues appear only sporadically.

Latency heat maps: Representing latency histograms over time is a 3-D visualization problem that can be nicely solved using heat maps. This has been discussed in detail by Brendan Gregg in ...

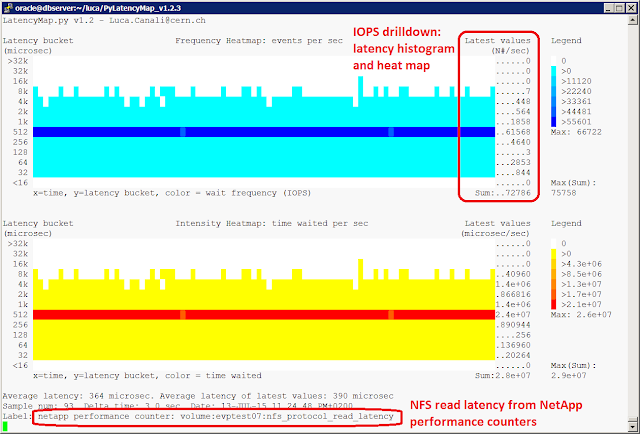

PyLatencyMap is a simple command-line visualization tool that takes latency histograms as input and outputs two latency heat maps, using ANSI escape codes for colors.
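
To give an idea of how a heat map can be drawn with ANSI codes, here is a minimal sketch. It is not PyLatencyMap's code: the palette (xterm 256-color codes from white to dark blue), the linear scaling and the function names `color_index` and `render_heat_map` are illustrative assumptions. Each latency bucket becomes a text line, each time sample becomes a cell colored by the value in that bucket.

```python
# Sketch: render a latency heat map in the terminal with ANSI 256-color codes.
# Not PyLatencyMap's code; palette and function names are illustrative assumptions.

RESET = "\x1b[0m"
# Assumed palette: xterm 256-color codes from white (no data) to dark blue.
BLUE_PALETTE = [15, 153, 111, 69, 27, 21, 18]


def color_index(value, max_value, palette_size=len(BLUE_PALETTE)):
    """Map a value to a palette index; index 0 is reserved for zero/no data."""
    if value <= 0 or max_value <= 0:
        return 0
    # Scale linearly into 1..palette_size-1 so any non-zero value is visible.
    idx = 1 + int((palette_size - 2) * value / max_value)
    return min(idx, palette_size - 1)


def render_heat_map(columns, bucket_labels, palette=BLUE_PALETTE):
    """Return one text line per latency bucket, highest latency first.

    columns: one entry per time sample; each is a list of values per bucket,
    ordered from the lowest to the highest latency bucket.
    """
    max_value = max((v for col in columns for v in col), default=0)
    lines = []
    for b in reversed(range(len(bucket_labels))):
        # Two spaces with a colored background form one cell of the map.
        cells = "".join(
            f"\x1b[48;5;{palette[color_index(col[b], max_value, len(palette))]}m  "
            for col in columns
        )
        lines.append(f"{bucket_labels[b]:>8} {cells}{RESET}")
    return lines


if __name__ == "__main__":
    labels = ["1ms", "2ms", "4ms", "8ms"]                         # hypothetical buckets
    samples = [[5, 40, 10, 0], [0, 30, 25, 5], [2, 10, 60, 20]]  # hypothetical ops/s
    for line in render_heat_map(samples, labels):
        print(line)
```

Reserving the first palette entry for zero keeps empty buckets visually distinct from buckets with only a few operations.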

The latency heat map at the top of the screen uses a blue palette and represents the number of operations per second as a function of latency bucket and time. The second map, in yellow-red, represents the time waited as a function of latency bucket and time.
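
The time-waited map can be estimated from the operation counts. The sketch below is an assumption about how such an estimate can be made, not necessarily how PyLatencyMap computes it: bucket B (in microseconds) is taken to hold latencies in [B/2, B), and every operation in it is approximated by the bucket midpoint 0.75 * B. The bucket values and rates are hypothetical.

```python
# Sketch: estimate the "time waited" per latency bucket from operation counts.
# Assumption: bucket B (microseconds) holds latencies in [B/2, B), and each
# operation is approximated by the bucket midpoint 0.75 * B. The real
# latencies inside a bucket are not known.

def time_waited_per_bucket(ops_per_sec, bucket_upper_us):
    """Return the estimated time waited per bucket, in microseconds per second."""
    return [n * 0.75 * b for n, b in zip(ops_per_sec, bucket_upper_us)]


buckets_us = [1024, 8192, 65536]   # hypothetical buckets: ~1 ms, ~8 ms, ~64 ms
ops = [1000, 100, 10]              # hypothetical operations per second
waited = time_waited_per_bucket(ops, buckets_us)
print(waited)  # [768000.0, 614400.0, 491520.0]
```

In this example the 10 operations per second in the slowest bucket account for almost as much time waited as the 1000 operations per second in the fastest one, which is why the second map can highlight slow I/O that looks faint in the operations map.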

How to collect and display I/O latency histograms for NetApp filers

For a quick start you can use Example10_NetApp_Cmode_reads.sh. Before running it, edit its parameters: the password to connect to the storage, the junction point you want to measure, the cluster management node and, optionally, the time interval between data points. Then run the example script. Similarly, Example10b_NetApp_Cmode_writes.sh captures and displays heat maps for write operations.

This is an example of the output:

Figure 1: Example output of PyLatencyMap measuring and displaying I/O read latency histograms using performance counters from a NetApp filer (C-mode). The top heat map (blue) represents the number of I/O operations per latency bucket over time. The lower heat map (yellow-red) represents the time waited per latency bucket over time.

Additional details

The 'glue' between NetApp and PyLatencyMap is provided by two Perl scripts written by Ruben Gaspar: (1) NetApp_histogram_Cmode.pl connects to the filer and gets the latency histograms; (2) NetApp_latency_connector.pl transforms the histograms from the NetApp filer format into the format understood by PyLatencyMap (i.e. power-of-two histograms).
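
The idea of rebinning a histogram with arbitrary bucket boundaries into power-of-two buckets can be sketched as follows. This is not the logic of NetApp_latency_connector.pl: the input format (pairs of exclusive upper bound in microseconds and count), the function names and the sample values are hypothetical. Each source bucket is moved whole into the power-of-two bucket that can hold its largest latencies, using the convention that bucket B covers [B/2, B).

```python
from collections import Counter

# Sketch: rebin a histogram with arbitrary bucket bounds into power-of-two buckets.
# Hypothetical input format: (exclusive upper bound in microseconds, count) pairs.


def pow2_bucket(upper_us):
    """Smallest power of two >= upper_us (power-of-two bucket B covers [B/2, B))."""
    b = 1
    while b < upper_us:
        b *= 2
    return b


def rebin_to_pow2(source_buckets):
    """Rebin (exclusive_upper_bound_us, count) pairs into power-of-two buckets.

    Each source bucket is assigned whole to the smallest power-of-two bucket
    whose upper bound is >= its own. This is an approximation: a source bucket
    straddling a power-of-two boundary cannot be split without knowing the
    individual latencies.
    """
    out = Counter()
    for upper_us, count in source_buckets:
        out[pow2_bucket(upper_us)] += count
    return dict(sorted(out.items()))


# Hypothetical source histogram: (upper bound in microseconds, count).
src = [(20, 5), (40, 7), (60, 3), (80, 1), (100, 4), (200, 2), (400, 1)]
print(rebin_to_pow2(src))  # {32: 5, 64: 10, 128: 5, 256: 2, 512: 1}
```

Assigning by upper bound errs on the side of reporting slightly higher latency, which is usually the safer choice for troubleshooting.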

Note that data aggregation into buckets is done by NetApp_latency_connector.pl using the same convention as Oracle's wait event histograms (v$event_histogram and v$event_histogram_micro): a value of N for bucket B means that there have been N I/O operations with latency between B/2 and B. If you use DTrace 'quantize' or SystemTap 'hist_log', note the difference: in those tools a value of N for bucket B means N operations with latency between B and 2*B.
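
The two conventions can be compared with a small sketch for integer latencies (for example in microseconds). The function names are illustrative and not part of any of the tools mentioned. For the same latency, the Oracle-style bucket label is twice the DTrace-style one, so a DTrace-style histogram can be relabeled by doubling its bucket values.

```python
# Sketch: bucket labels under the two histogram conventions (integer latencies).
# Function names are illustrative, not APIs of Oracle, DTrace or SystemTap.


def oracle_bucket(latency):
    """Smallest power of two B > latency, i.e. B/2 <= latency < B for latency >= 1."""
    if latency < 0:
        raise ValueError("latency must be non-negative")
    return 1 << latency.bit_length()


def dtrace_bucket(latency):
    """Power of two B with B <= latency < 2*B (DTrace quantize style); 0 for 0."""
    if latency < 0:
        raise ValueError("latency must be non-negative")
    return 0 if latency == 0 else 1 << (latency.bit_length() - 1)


def dtrace_to_oracle(hist):
    """Relabel a DTrace-style histogram {bucket: count} with Oracle-style buckets."""
    return {(2 * b if b > 0 else 1): n for b, n in hist.items()}


for x in (3, 4, 5):
    print(x, oracle_bucket(x), dtrace_bucket(x))
# 3 4 2
# 4 8 4
# 5 8 4
```

A latency of 3 is therefore reported in bucket 4 by the Oracle convention and in bucket 2 by DTrace 'quantize', although both describe the same measurement.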

Note also the following dependencies: the scripts discussed here for integrating NetApp performance counters with PyLatencyMap have been developed for NetApp Clustered Data ONTAP and in particular have been tested on versions 8.2 and 8.3 (notably, they will not work against ONTAP version 7). Perl is also needed, including the modules Net::OpenSSH and IO::Pty. PyLatencyMap is written in Python.

Conclusions and additional links

PyLatencyMap is a tool for collecting and displaying latency heat maps on the command line, intended as an aid for performance troubleshooting and benchmarking. PyLatencyMap has now been integrated with NetApp performance counters, which adds to its existing data sources, among them Oracle wait events and SystemTap and DTrace probes.

Download PyLatencyMap from:

https://github.com/LucaCanali/PyLatencyMap or http://canali.web.cern.ch/canali/resources.htm

Previous blog entries on PyLatencyMap:

http://externaltable.blogspot.com/2013/09/getting-started-with-pylatencymap.html

http://externaltable.blogspot.com/2013/08/pylatencymap-performance-tool-to-drill.html